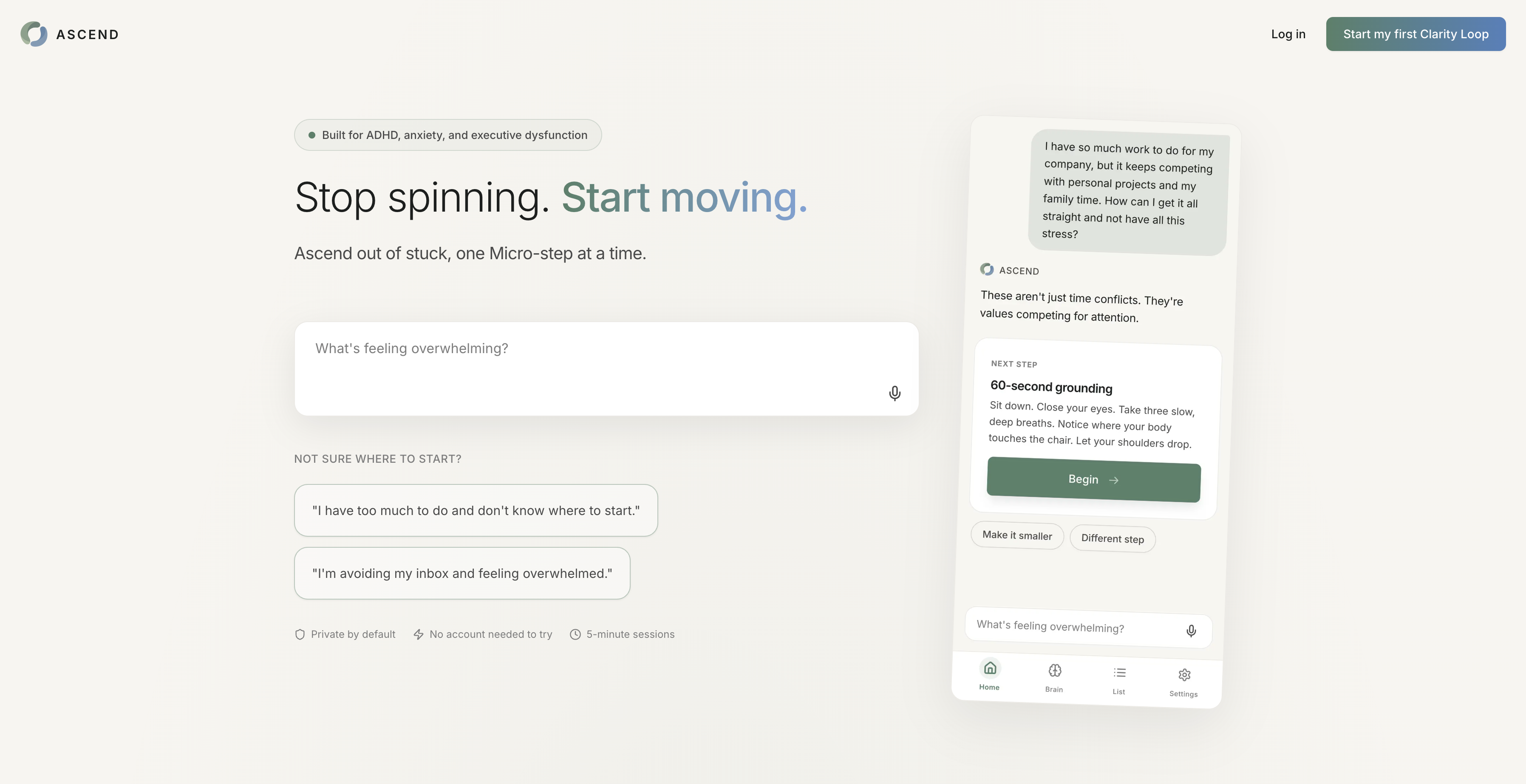

Ascend · Solo design + build · 2026

A nervous-system regulation app for executive-dysfunction freeze. Shipped solo in 10 weeks.

An AI-native PWA that detects when a user has stalled and quietly offers a smaller next move. Built end-to-end in 10 weeks, solo.

The system

A decision engine, not a chatbot

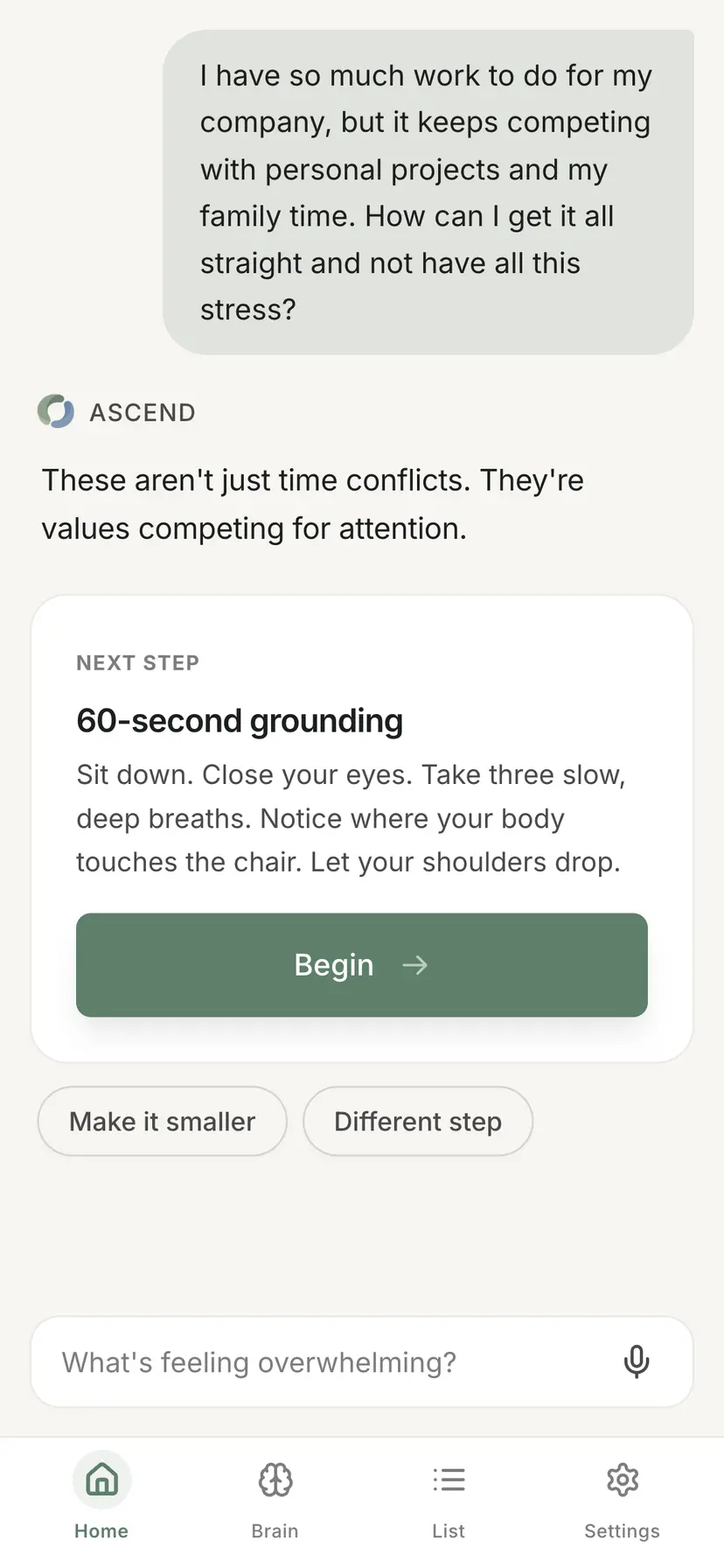

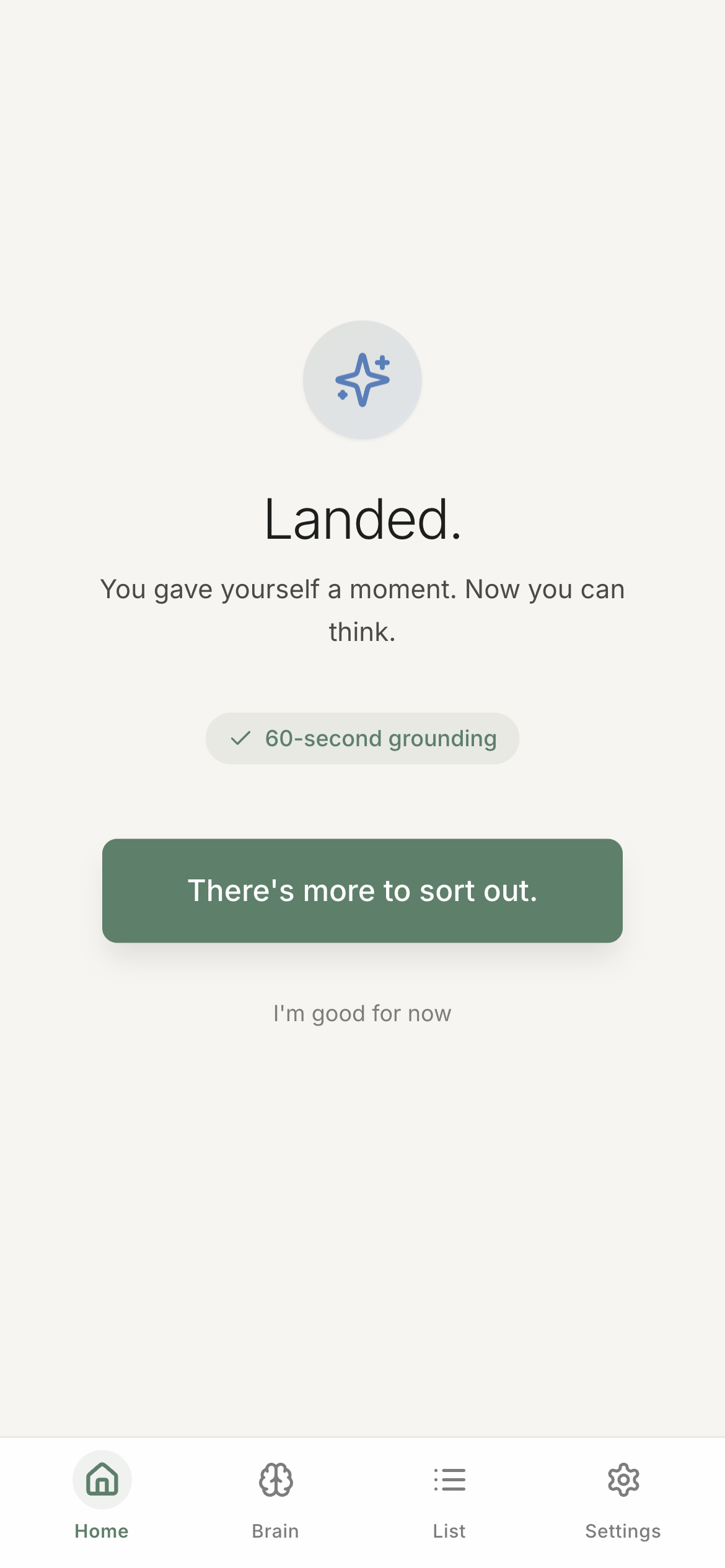

Ascend's core is not a chat interface. It's a Clarity Loop that runs every session (Witness → Micro-step → Execute) wrapped around a decision engine that picks an intervention type before the model writes a word.

Inputs (voice or text) → state inference (arousal, clarity, actionability, recent outcomes) → intervention selection with recency penalties → prompt assembly → Claude response → session memory update

A single conversational agent with one personality fails the core use case. A user who needs to regulate first does not want a micro-step. A user with low clarity does not want a reframe. The engine routes the conversation to the right mode before generating language.
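
To make the ordering concrete, here is a minimal sketch of "route first, generate second." It is an illustration, not Ascend's actual code: the type names, the `planTurn` function, the injected `inferState` and `selectIntervention` callbacks, and the prompt wording are all assumptions. The point it shows is that the intervention type is fixed before any prompt text exists.

```typescript
// Sketch only: names, fields, and prompt wording are assumptions.
type InterventionType =
  | "grounding"
  | "micro-step"
  | "bridge"
  | "momentum"
  | "completion";

interface InferredState {
  arousal: number;       // 0..1, higher = more activated
  clarity: number;       // 0..1, higher = clearer on the real problem
  actionability: number; // 0..1, higher = more able to act right now
}

interface TurnPlan {
  state: InferredState;
  intervention: InterventionType;
  prompt: string;
}

// One instruction per mode, so the model is told what kind of reply to write.
const MODE_INSTRUCTIONS: Record<InterventionType, string> = {
  grounding: "Help the user settle first. Do not propose a task.",
  "micro-step": "Offer the smallest possible first action.",
  bridge: "Reframe what the real problem is.",
  momentum: "Suggest a familiar sequence the user already trusts.",
  completion: "Give explicit permission to stop, defer, or do less.",
};

// Everything here runs before any model call: infer, select, then assemble.
function planTurn(
  userInput: string,
  inferState: (input: string) => InferredState,
  selectIntervention: (state: InferredState) => InterventionType,
): TurnPlan {
  const state = inferState(userInput);
  const intervention = selectIntervention(state);
  const prompt = [
    `Mode: ${intervention}`,
    MODE_INSTRUCTIONS[intervention],
    `User said: ${userInput}`,
  ].join("\n");
  return { state, intervention, prompt };
}
```

Injecting the two callbacks keeps the sketch independent of how state is actually inferred, which the text above does not specify.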

The five intervention types

- Grounding – settle the nervous system first (high arousal, low actionability)

- Micro-step – the smallest possible first action (user stuck on initiation)

- Bridge – reframe what the real problem is when clarity is low

- Momentum – a familiar sequence the user already trusts (low energy, decision fatigue)

- Completion / Permission – explicit permission to stop, defer, or do less when guilt is the load-bearing problem
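
One way to read the list above is as an ordered rule table. The sketch below is hypothetical: the `energy` and `guilt` signals, the 0.7 and 0.3 thresholds, and the rule order are assumptions made for illustration, not the engine's real scoring.

```typescript
// Illustrative only: thresholds, rule order, and the energy/guilt signals are assumptions.
type InterventionType =
  | "grounding"
  | "micro-step"
  | "bridge"
  | "momentum"
  | "completion";

interface StateSignals {
  arousal: number;       // 0..1
  clarity: number;       // 0..1
  actionability: number; // 0..1
  energy: number;        // 0..1, assumed signal for "low energy, decision fatigue"
  guilt: number;         // 0..1, assumed signal for "guilt is the load-bearing problem"
}

const HIGH = 0.7; // assumed cut-off for "high"
const LOW = 0.3;  // assumed cut-off for "low"

function pickIntervention(s: StateSignals): InterventionType {
  // Regulate first: high arousal with low actionability gets grounding, not a task.
  if (s.arousal >= HIGH && s.actionability <= LOW) return "grounding";
  // Guilt carrying the load: permission to stop, defer, or do less.
  if (s.guilt >= HIGH) return "completion";
  // Low clarity: reframe the problem before proposing a step.
  if (s.clarity <= LOW) return "bridge";
  // Low energy: lean on a sequence the user already trusts.
  if (s.energy <= LOW) return "momentum";
  // Otherwise the user is stuck on initiation: offer the smallest first action.
  return "micro-step";
}
```

First-match rules are easy to read and audit; a scored version would be needed before recency penalties can shift the outcome between types.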

Transferable: the adaptive-intervention pattern, progressive disclosure under cognitive load, and flat-domain organization (instead of nested hierarchy) apply directly to LLM config surfaces, feature-flag rules, observability dashboards, and agent builders, or anywhere else users freeze under complexity.

The problem

Lists are the trigger, not the solution

Traditional productivity tools assume the user knows where to start and just needs better organization. For people with ADHD, burnout, or high-anxiety roles, the 50-task backlog is itself the trigger. Every UI element becomes a decision point, and decision load in a dysregulated state produces shutdown, not action.

The real problem is nervous-system dysregulation triggered by cognitive overload, not task management.

Competitive gap

| Tool | Strength | Why it falls short |
|---|---|---|
| Todoist / Things | Clean task management | Lists cause paralysis, not momentum |
| Notion | Flexible, powerful | Complexity becomes its own project |
| Structured | Time-blocking | Requires knowing what to do when |
| Finch / Youper | Reflection-focused | No concrete actions |
| Goblin Tools | ADHD-friendly breakdown | No memory, no state, one-shot |

No existing tool combined witnessing (reflect, validate) with one concrete action while actively working to regulate the user's state before prescribing work.

Three principles that drove the design

The non-negotiables

One action at a time. Non-negotiable.

Single-action output is the core product constraint, not a UI preference. The engine returns one primaryAction, with at most two refinement chips ("Make it smaller", "Different step") that re-run the loop rather than expanding the surface. The alternative I rejected was a ranked list of 3–5 suggestions. It would have been faster to build, but it reproduces the trigger Ascend exists to remove.
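
The constraint can be pushed into the types so that a list of suggestions is not representable. This is a sketch under assumptions: `LoopOutput`, `toLoopOutput`, `refine`, and the `rerun` callback are hypothetical names; only `primaryAction` and the two chip labels come from the description above.

```typescript
// Sketch only: apart from primaryAction and the chip labels, all names are assumptions.
type RefinementChip = "Make it smaller" | "Different step";

// A tuple union caps the chips at two at compile time.
type Chips =
  | readonly []
  | readonly [RefinementChip]
  | readonly [RefinementChip, RefinementChip];

interface LoopOutput {
  readonly primaryAction: string; // exactly one action, never an array
  readonly chips: Chips;
}

// Collapse whatever the engine ranked down to a single surfaced action.
function toLoopOutput(rankedCandidates: readonly string[]): LoopOutput {
  const first = rankedCandidates
    .map((candidate) => candidate.trim())
    .find((candidate) => candidate.length > 0);
  if (first === undefined) {
    throw new Error("engine produced no usable action");
  }
  return { primaryAction: first, chips: ["Make it smaller", "Different step"] };
}

// A chip tap re-runs the loop and replaces the action; it never appends a second one.
function refine(
  current: LoopOutput,
  chip: RefinementChip,
  rerun: (previousAction: string, chip: RefinementChip) => readonly string[],
): LoopOutput {
  return toLoopOutput(rerun(current.primaryAction, chip));
}
```

Because `refine` returns a fresh `LoopOutput`, the surface stays the same size no matter how many times the user nudges it.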

Witness before action

A variant that jumped straight to a micro-step tested as "not hearing me." Every response now opens with a Witness phase (situation, emotional tone, core pressure) before the engine surfaces an action.

Voice-first input

About 60% of Clarity Loops begin in voice. When a user is overwhelmed, speaking is lower-friction than writing. Transcription runs through the Whisper API, with a text fallback. I considered keyboard-first because it's faster to build and ship, but the regulation hypothesis required voice.

Failure modes

What happens when the model is wrong

A conversational AI product fails quietly if you don't design for what happens when the model is wrong, slow, or unavailable. Four failure classes, with explicit responses for each:

| Failure | What the user sees | Why |
|---|---|---|
| Whisper transcription latency (2–3s) | Animated waveform + "Transcribing…" | Silence reads as "broken"; movement reads as "working" |
| Engine picks the wrong intervention type | "Make it smaller" / "Different step" chips re-run the loop with adjusted state | Lets the user nudge the engine without surfacing the model |
| Free-tier limit reached (5 lifetime loops) | Soft upgrade prompt, with a compassionate bypass when arousal is high | Hard blocks trigger shame; a user mid-spiral needs the loop more, not less |
| Model repeats an intervention type | Engine de-weights that type next turn (recency penalty) | Repetition is the fastest trust killer in a coaching tone |
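
The recency penalty in the last row could look something like the sketch below. The penalty shape is an assumption (a fixed deduction for the most recent use, halving for each older use); the actual algorithm is not described here, and `applyRecencyPenalty` and `pickHighest` are hypothetical names.

```typescript
// Sketch only: the penalty size, the decay, and the function names are assumptions.
type InterventionType =
  | "grounding"
  | "micro-step"
  | "bridge"
  | "momentum"
  | "completion";

type Scores = Record<InterventionType, number>;

// recent is ordered oldest to newest; the newest use is penalized the most.
function applyRecencyPenalty(
  baseScores: Scores,
  recent: readonly InterventionType[],
  penalty = 0.5,
  decay = 0.5,
): Scores {
  const adjusted: Scores = { ...baseScores };
  let weight = penalty;
  for (const used of [...recent].reverse()) {
    adjusted[used] -= weight;
    weight *= decay; // older uses matter less
  }
  return adjusted;
}

// Return the type with the highest score; ties keep the earlier key.
function pickHighest(scores: Scores): InterventionType {
  let best: InterventionType = "grounding";
  for (const type of Object.keys(scores) as InterventionType[]) {
    if (scores[type] > scores[best]) best = type;
  }
  return best;
}
```

With base scores that favor a micro-step, a micro-step on the previous turn is enough to tip the next turn toward the runner-up, which is the de-weighting behavior the table describes.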

The compassionate bypass is the decision I'm proudest of. If arousalScore ≥ 0.7, the paywall is suppressed and generation runs anyway. The user in the worst state is the user least able to handle a billing wall, and a product whose thesis is zero-guilt cannot let revenue logic override that.
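
As a sketch, the gate reduces to a few ordered checks. The 0.7 threshold and the five-loop limit come from the text above; the `gateLoop` function, the `GateInput` fields other than `arousalScore`, and the reason labels are assumptions for illustration.

```typescript
// Sketch only: function name, input shape, and reason labels are assumptions.
const FREE_LOOP_LIMIT = 5;  // from the text: 5 lifetime loops on the free tier
const BYPASS_AROUSAL = 0.7; // from the text: arousalScore >= 0.7 suppresses the paywall

interface GateInput {
  isPaid: boolean;
  lifetimeLoops: number; // loops already used before this one
  arousalScore: number;  // 0..1
}

type GateDecision =
  | { allow: true; reason: "paid" | "within-free-tier" | "compassionate-bypass" }
  | { allow: false; reason: "soft-upgrade-prompt" };

function gateLoop(input: GateInput): GateDecision {
  if (input.isPaid) return { allow: true, reason: "paid" };
  if (input.lifetimeLoops < FREE_LOOP_LIMIT) {
    return { allow: true, reason: "within-free-tier" };
  }
  // Over the limit, but the user is in a high-arousal state: generate anyway.
  if (input.arousalScore >= BYPASS_AROUSAL) {
    return { allow: true, reason: "compassionate-bypass" };
  }
  return { allow: false, reason: "soft-upgrade-prompt" };
}
```

Returning a reason alongside the decision makes bypasses countable later, which matters for a rule that deliberately gives up revenue.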

What I would not ship in this state: proactive push notifications. Getting a "you haven't opened Ascend in 3 days" notification from a product that exists to remove guilt would break the core promise. Push goes on the roadmap only when the notification can be state-aware (e.g., calendar-informed), not time-based nagging.

Reflection

What worked, what I'd do differently, when this approach is wrong

What worked

- Research drove the non-obvious calls. "One action at a time," voice-first, and zero-guilt were validated in interviews before I built them. I did not have to retrofit the product around user feedback because I designed from it.

- AI as thinking partner, not just executor. Claude Code helped me work through the recency-penalty algorithm and the Firestore composite-index design. I would have shipped a worse schema without it.

- Constraints forced focus. Solo, nights-and-weekends, no funding. This is why the product has 5 intervention types and not 15.

What I'd do differently

- Instrumentation from day one. I added analytics after the MVP shipped. I'm now backfilling a picture I could have had in real time. For any future project, eval and telemetry are part of the MVP, not a later phase.

- Willingness-to-pay before billing infrastructure. I built the Stripe integration before validating that anyone would pay. It's a process error I'd avoid in a product engagement, where the team does that validation work in parallel.

- Larger initial beta. Twelve users give direction but not confidence. A beta of 25–30 would have been better for roughly the same cost in my time.

- An explicit "this didn't land" affordance. The refinement chips do part of this job, but a direct rejection signal would give the engine cleaner training data and the user a clearer release valve. It's the next UX bet.

When I wouldn't ship this approach

The adaptive-intervention pattern works when user state is inferable from a short input and the cost of a wrong intervention is recoverable within the same session. I wouldn't apply it to a tool used during an active crisis. Ascend assumes a 5–10 minute window and a recoverable session; crisis support needs different patterns, escalation paths, and human handoff. The pattern is for coaching, not adjudication.

Methodology note. "Returned" = opened a second session within 14 days of first use. "Completed first action" = self-reported via in-app prompt at the end of the first Clarity Loop. Twelve users recruited from ADHD-focused communities; no incentive offered.
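
For precision, the "Returned" definition can be written as a small function. This is a sketch under assumptions: the data shape (a list of session start times per user, in epoch milliseconds), the function names, and the choice to treat exactly 14 days as inside the window are mine, not documented behavior.

```typescript
// Sketch of the "Returned" definition; data shape and inclusive boundary are assumptions.
const RETURN_WINDOW_MS = 14 * 24 * 60 * 60 * 1000;

// sessionStartsMs: epoch-millisecond start times of one user's sessions, in any order.
function hasReturned(sessionStartsMs: readonly number[]): boolean {
  const sorted = [...sessionStartsMs].sort((a, b) => a - b);
  const first = sorted[0];
  const second = sorted[1];
  if (first === undefined || second === undefined) return false;
  return second - first <= RETURN_WINDOW_MS;
}

// Share of users whose second session fell inside the window.
function returnRate(users: readonly (readonly number[])[]): number {
  if (users.length === 0) return 0;
  return users.filter((sessions) => hasReturned(sessions)).length / users.length;
}
```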

Status

On Ascend

Ascend is portfolio work. It's a real product, production-stable, customer-facing, available across desktop, native mobile, and PWA. Built to test a hypothesis and to demonstrate end-to-end designer-developer capability.

I maintain it on my own time and in small windows. I'm available for full-time senior, staff, or lead IC work. Ascend does not compete with that.