Cereba · Principal AI/UX Designer · 2024–2025

Same product, two architectures. Built it on Bubble. Rebuilt it Claude-native in under a month.

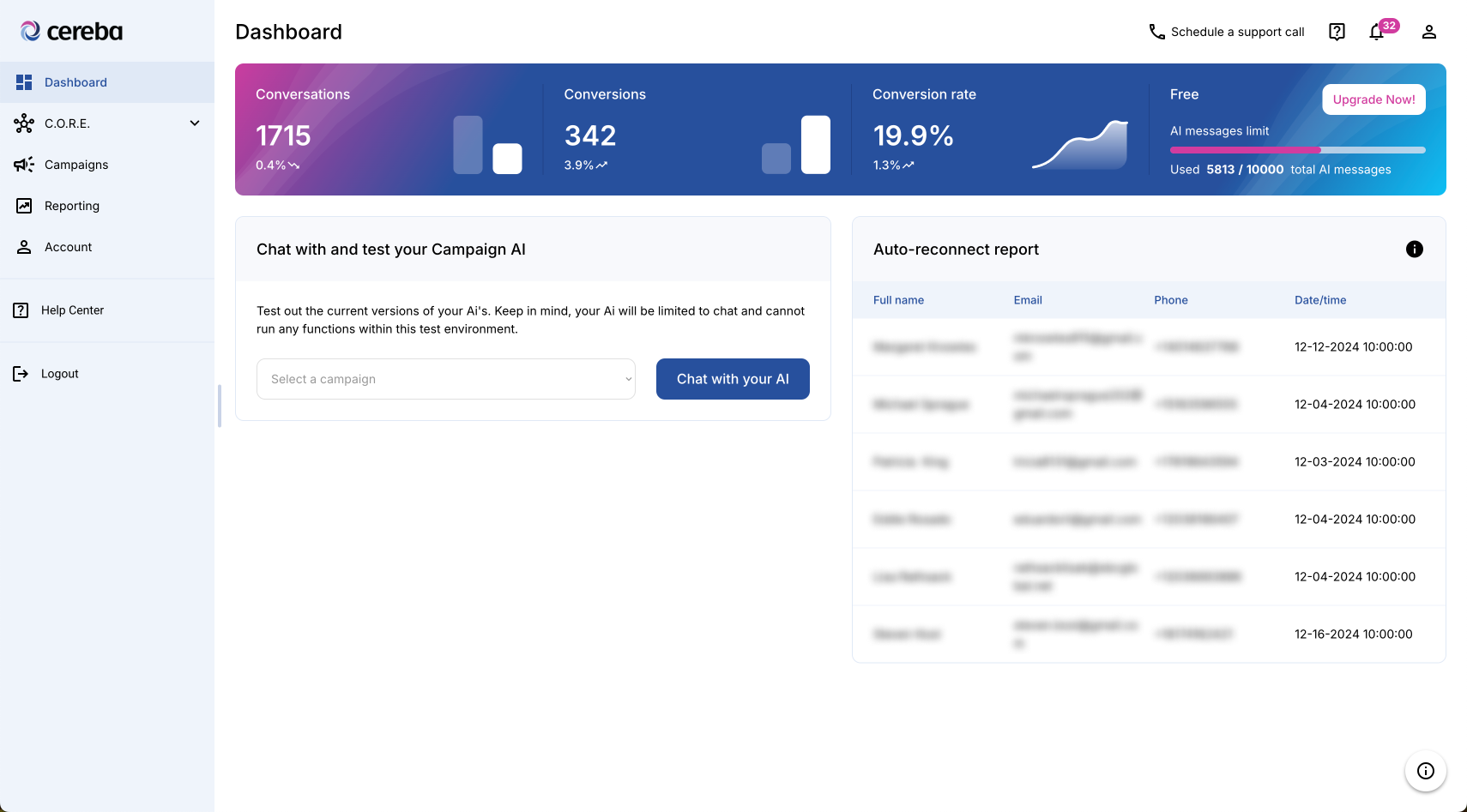

A self-building agent for service businesses. Qualification, booking, cancellation recovery, reactivation. V1 shipped on Bubble. V2 was rebuilt Claude-native once the AI tooling stopped being the limiter and the platform became one, with the GoHighLevel (GHL) onboarding fixed end-to-end.

V1: The Bubble build

Designer-developer of one

I was the only designer at Cereba and also built the front-end. Designer-developer scope from day one, on a team that paired internal product and design (me + stakeholders) with external engineering, QA, and product partners.

What I owned

- End-to-end product and UX design: onboarding, campaign creation, analytics, dashboard

- Front-end build in Bubble.io. Every screen, every flow, every state

- Stripe integration, database wiring, and the connections between Bubble and the third-party services the product depended on

- PM-adjacent work: stakeholder discovery, prioritization, sprint cadence, and async reviews with the external engineering team

- The iteration loop after launch: onboarding metrics, user interviews, and the streamlining work that came out of both

V1 launched. Real users, real conversion data, real customers running real revenue ops on top of it. AI was already in the product, but the architecture wasn't built around modern LLMs. It was built around what Bubble could integrate with at the time.

The call to rebuild

When the platform is the limit, not the design

Claude's capability crossed a threshold that V1's architecture couldn't take advantage of. Multi-LLM support was structurally difficult on Bubble. Scalability was capped. The platform itself had become the constraint on how fast the product could improve.

Retrofitting AI onto V1 would have inherited V1's limitations. Rebuilding meant a clean architecture aimed at where the field was going.

The call was joint. The founding team and me. My case had four legs: UX (V1's onboarding metrics had been telling us what needed to change), stability (Bubble was strained at our scale), scalability (we needed headroom we weren't going to get on the existing stack), and speed of improvement (the cost of the rewrite was less than the cost of carrying V1's limits forward another year).

This wasn't "AI is hot, we need it." It was: the tools available now can do what V1 couldn't, and the architecture rewrite buys us forward velocity we cannot buy any other way.

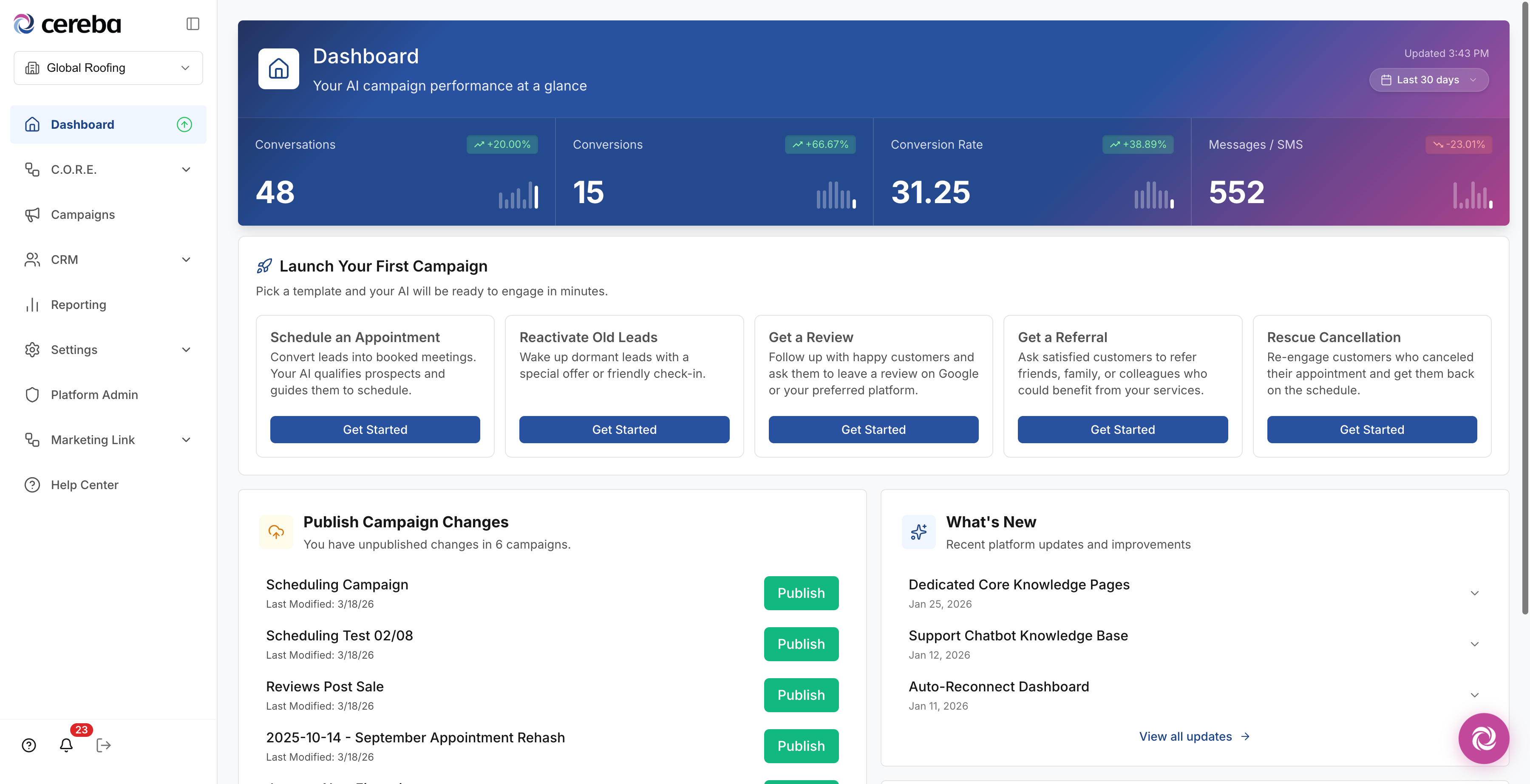

V2: The Claude-native rebuild

Under a month, end-to-end

Full rewrite. New architecture, new repo, all databases reconnected, all production data migrated. Front-end rebuilt from scratch. Visuals refreshed. Capability expanded. Claude could carry more weight than V1's stack, and the design stretched to use it. Marketing site rebuilt alongside.

Time, wall-clock: under one month.

How a one-designer rebuild ships in a month

This is where I developed the AI-as-thought-partner methodology that now runs across every project I work on, including Ascend. A Claude project and a GPT project as design partners, for inspiration, research synthesis, technical feasibility checks, and pattern audits. Tied directly to traditional UX practice: real user feedback from V1 drove every decision the AI helped me move on faster.

The AI didn't replace judgment; it accelerated the work around the judgment. I made the calls. Claude helped me get to the calls in hours instead of days.

What emerged here: C.O.R.E. Intent

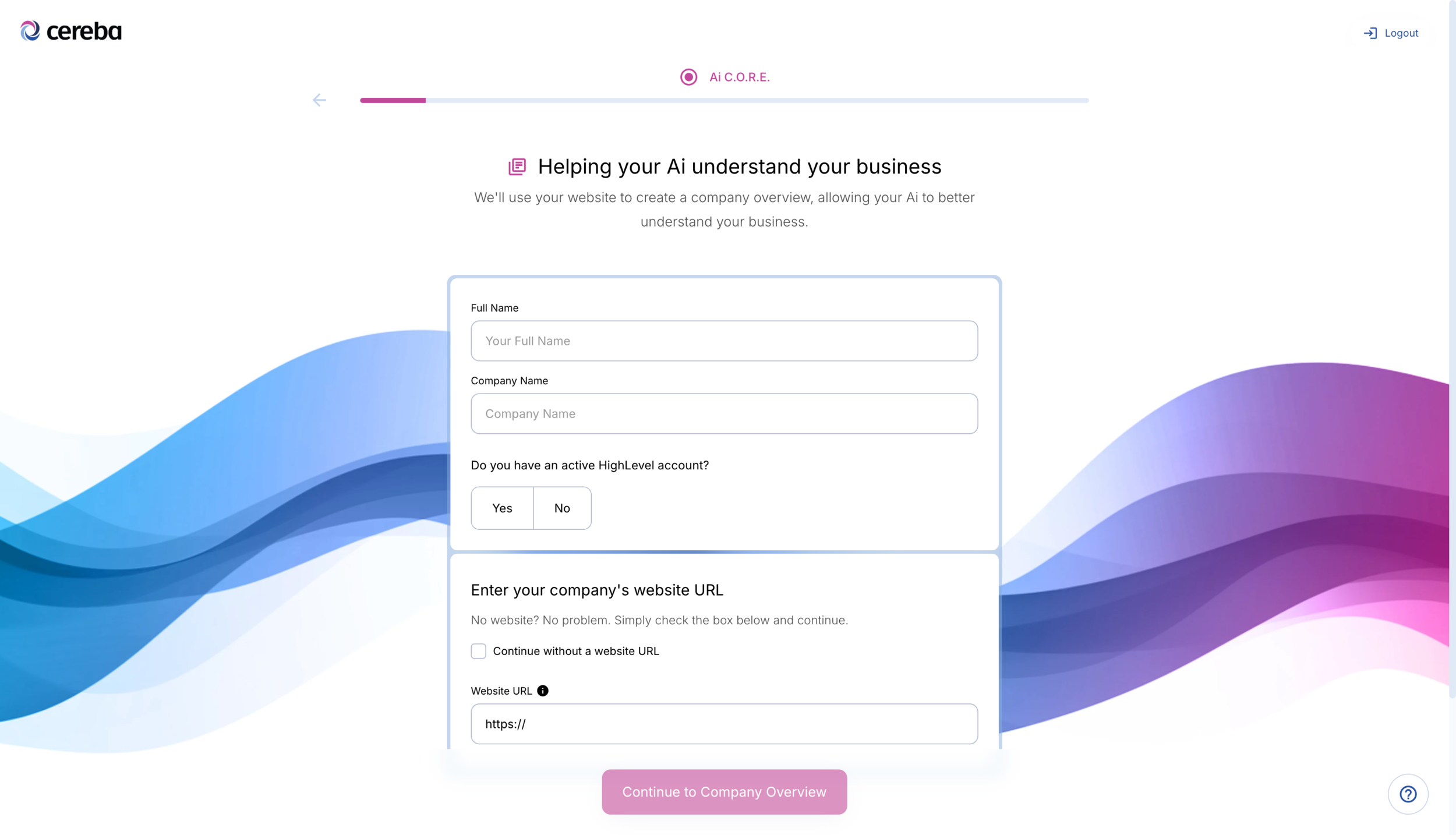

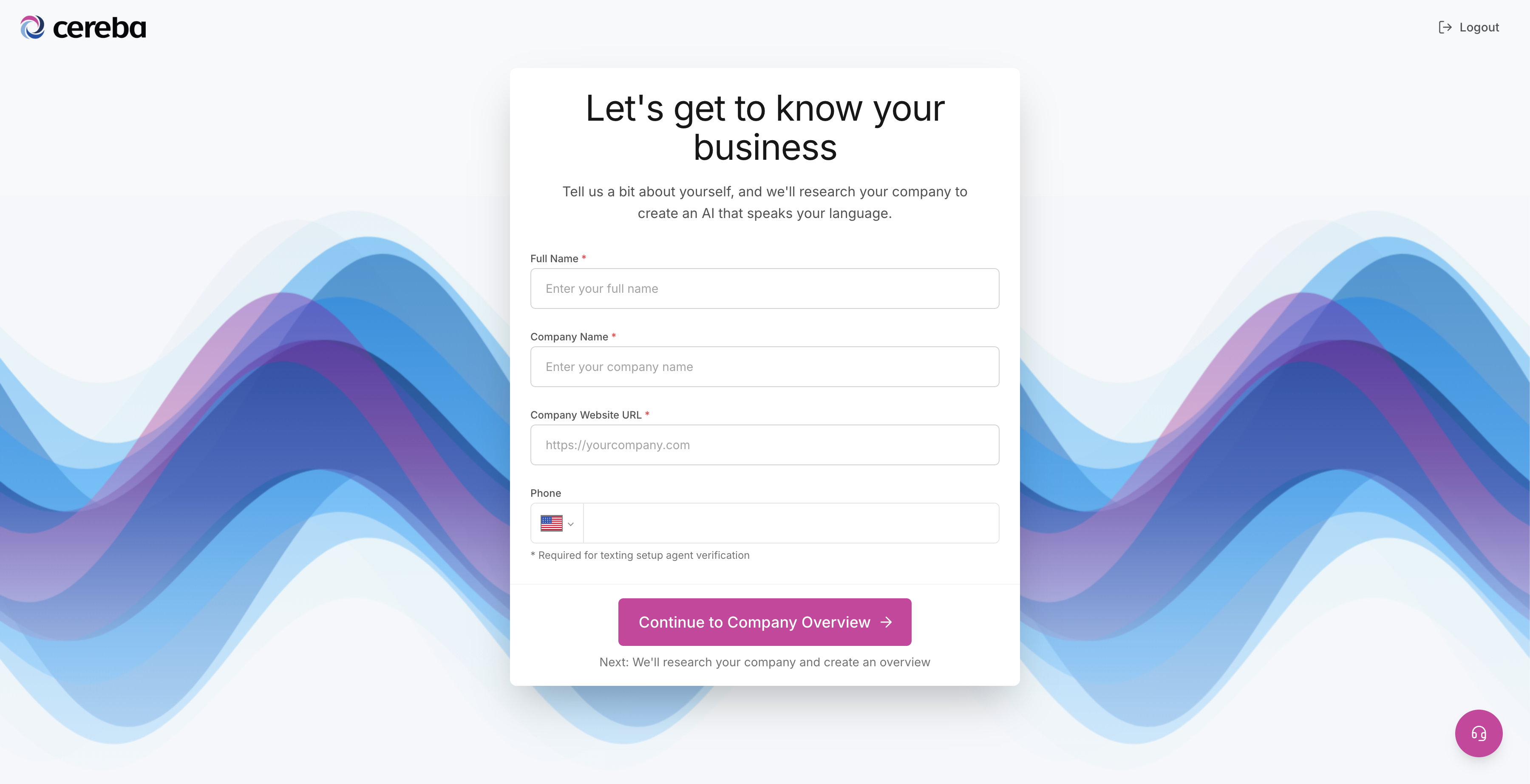

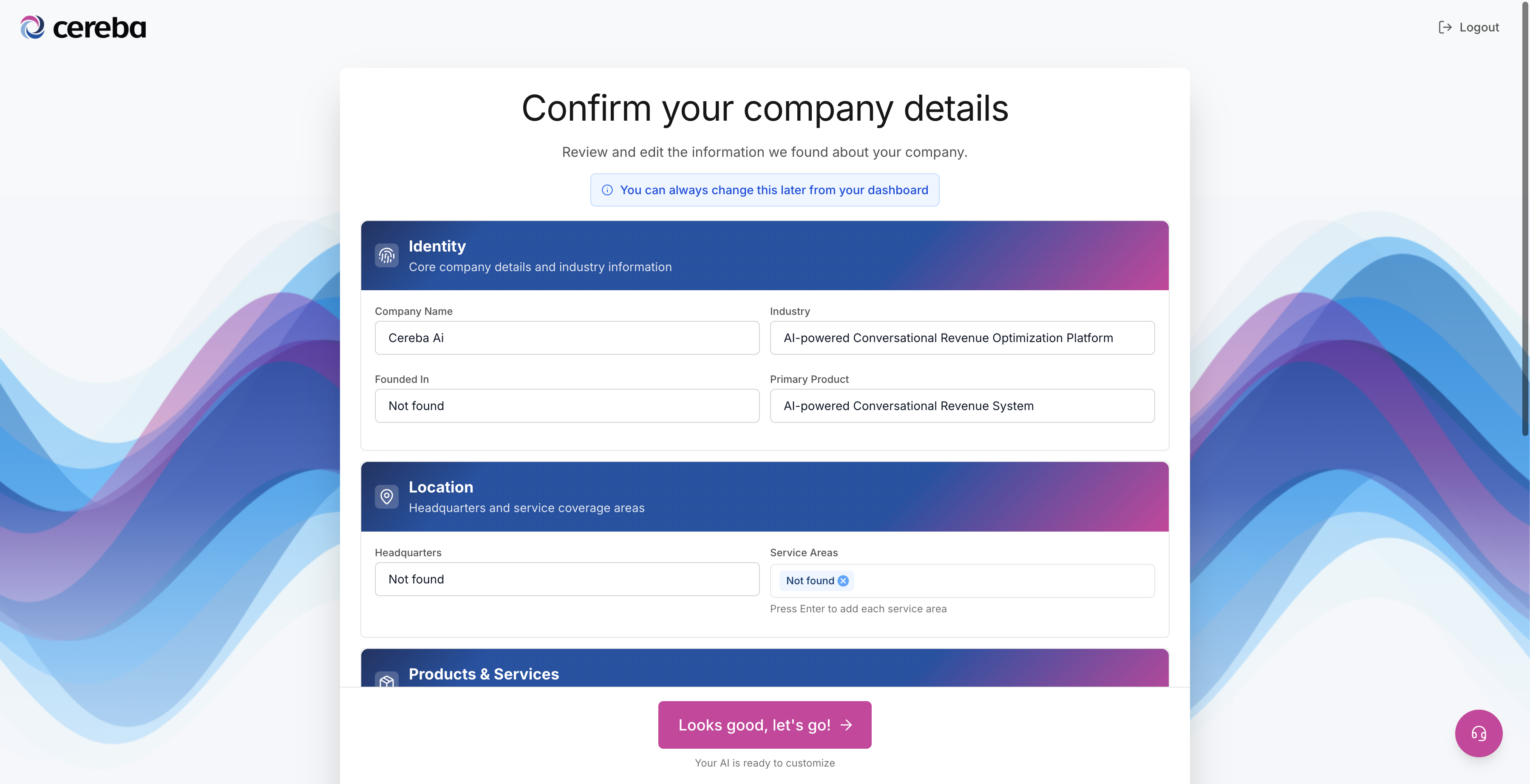

V1's onboarding asked users to define their business from a blank prompt. V2's onboarding surfaces what the system already knows (about the user, the account, the integration state) and asks for the smallest input needed to act. AI as product behavior, not chat wrapper.

The blank-prompt problem is the same one most B2B AI products still ship with: here's a text box, tell us what you want. C.O.R.E. Intent inverts it: here's what we recognize, confirm or correct it, then we move.
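To make the inversion concrete, here is a minimal sketch of the "recognize, confirm or correct, then move" loop. It is an illustration under stated assumptions, not Cereba's implementation: `KnownContext`, `REQUIRED_FIELDS`, `next_prompt`, and `apply_corrections` are hypothetical names, and the fields are placeholders for whatever the system can infer from the user, the account, and the integration state.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class KnownContext:
    """What the system already knows. All field names are hypothetical."""
    business_name: Optional[str] = None
    timezone: Optional[str] = None
    ghl_connected: bool = False


# Hypothetical: the fields the agent needs before it can act.
REQUIRED_FIELDS = ("business_name", "timezone")


def next_prompt(ctx: KnownContext) -> dict:
    """Build the smallest ask: confirm what is recognized, request only what is missing."""
    recognized = {
        name: getattr(ctx, name)
        for name in REQUIRED_FIELDS
        if getattr(ctx, name) is not None
    }
    missing = [name for name in REQUIRED_FIELDS if getattr(ctx, name) is None]
    if not ctx.ghl_connected:
        missing.append("ghl_connection")
    return {"confirm": recognized, "ask": missing, "ready": not missing}


def apply_corrections(ctx: KnownContext, **corrections) -> KnownContext:
    """Return a new context with the user's confirmations or corrections applied."""
    return replace(ctx, **corrections)
```

A blank-prompt flow would ask for everything up front. Here a user whose account already reveals the business name is asked only for a timezone, and the flow is ready to act as soon as that one answer arrives.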

What V1 surfaced, V2 fixed

The GHL connect step was the conversion floor

V1's onboarding metrics were specific. Users got stuck at the GoHighLevel (GHL) connect step. That single step was the conversion floor, and onboarding around it was too long, too in-the-weeds, and forced upfront before users had seen what the product could do.

The V2 onboarding rewrite

- Shorter and handheld. Reduced step count, replaced free-form configuration with guided defaults the user could override

- Moved out of forced flow. Onboarding became a quick-setup guide inside the dashboard, not a wall users had to clear before reaching the product

- Product preview before paywall. Users see what they're getting before they're asked to pay for it

- GHL connection rewritten as a primary unblock target. The integration that used to be the conversion floor became one of the smoothest steps in the flow

The principle: V1 ran long enough in production to teach us what the product needed to win. V2 was where we built the win. That's the right division of labor: V1 to surface the question, V2 to ship the answer.

Reflection

What worked, what I'd do differently, when this approach is wrong

What worked

- The judgment call. Rebuilding rather than retrofitting was right. It bought a year of forward velocity instead of a year of patching limits we already understood.

- The methodology developed during the rebuild. AI as thought partner, paired with traditional UX practice. The same approach I now use everywhere. It started here.

- One designer through both eras. The same person who saw V1's onboarding metrics led V2's rewrite. No translation loss between what the data said and what shipped.

What I'd do differently

- Surface the rebuild case earlier. By the time we made the call, V1 had carried more passengers than it should have. The signal was visible a few months before we acted on it.

- Instrument V2 from day one. V1 taught us what to look for. V2 should have started with the dashboards V1 ended with, not built them in afterward.

When this approach is wrong

Rebuild when the platform is the limit, not when the design is. If V1's problems had been UX problems, retrofitting would have been the right call. Rewrites are expensive and most of them don't need to happen. The trigger here was that the platform couldn't support what we wanted to build next, not that the screens were wrong.